Context

Whenever you work on agentic applications, you need a way to navigate non-deterministic behavior, and that means building against samples of known-good data. This lets you test whether your application works as expected under known scenarios.

This is harder than it sounds. Your initial dataset may not cover cases you encounter in the wild, and it certainly won’t cover unexpected edge cases you didn’t think to consider — especially as your feature set evolves. Over time you find yourself relying on manual testing or mocks, both of which can give you a false sense of confidence. Then a bug surfaces in production and you realize your test coverage had a blind spot.

Worse, as your application undergoes significant changes, it’s entirely possible to introduce regressions without knowing it. A change that improves one behavior might quietly degrade another.

Properly addressing these problems requires both a proactive and a reactive approach to testing your agentic application, so that what you ship stays in line with your product spec. The rest of this post lays out a practical architecture for doing exactly that.

LLM-as-Judge Pattern

To write a test against any agentic functionality, you need a consistent input and a way to assert whether a produced output aligns with your expectations. The problem is that LLM output is non-deterministic — you’re not guaranteed to receive the same result even when you pass the same input.

To overcome that variance, we can test LLM output by leveraging another LLM to evaluate it. This second LLM is called a Judge, and you control exactly what it measures when deciding how well an output performed across a set of criteria.

In practice, you’d specify that the Judge should base its evaluation on a specific rubric, ground it against a general example (few-shot prompting), then further ground it against a test-case-specific expected output. You’d prompt it to produce structured output — a final grade alongside chain-of-thought reasoning for how it arrived at that grade.
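The pieces described above can be sketched as follows. This is a minimal illustration, not a library API: `JudgeSpec`, `build_judge_prompt`, and `parse_verdict` are hypothetical names, the rubric and example text are made up, and the model call itself is left out so that only prompt assembly and verdict parsing are shown.

```python
import json
from dataclasses import dataclass


@dataclass
class JudgeSpec:
    """Hypothetical container for everything the Judge is grounded on."""
    rubric: str            # what the Judge measures
    few_shot_example: str  # one general graded example
    expected_output: str   # test-case-specific expectation


def build_judge_prompt(spec: JudgeSpec, actual_output: str) -> str:
    """Assemble rubric, few-shot example, expectation, and the output under test."""
    return "\n\n".join([
        "You are an evaluation judge.",
        f"Rubric:\n{spec.rubric}",
        f"Graded example:\n{spec.few_shot_example}",
        f"Expected output for this test case:\n{spec.expected_output}",
        f"Actual output:\n{actual_output}",
        'Respond with JSON only: {"reasoning": "<step-by-step reasoning>", '
        '"grade": "pass" or "fail"}',
    ])


def parse_verdict(raw: str) -> tuple[str, str]:
    """Parse the Judge's structured reply into (grade, reasoning)."""
    data = json.loads(raw)
    grade = data["grade"]
    if grade not in {"pass", "fail"}:
        raise ValueError(f"Unexpected grade: {grade!r}")
    return grade, data["reasoning"]


spec = JudgeSpec(
    rubric="Pass only if the answer states the refund window correctly.",
    few_shot_example='Output: "Refunds take 90 days." -> fail (wrong window)',
    expected_output="Refunds are accepted within 30 days of purchase.",
)
prompt = build_judge_prompt(spec, "You can get a refund within 30 days.")

# A reply shaped the way the prompt requests (hand-written here, not model output).
grade, reasoning = parse_verdict(
    '{"reasoning": "Matches the 30-day window.", "grade": "pass"}'
)
```

Asking for the reasoning field before the grade is a deliberate choice in this sketch: the Judge writes out its justification before committing to a verdict.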

The LLM-as-Judge pattern lets you consistently construct test cases against crafted scenarios while maintaining control over both inputs and expected outputs. It bridges the gap between the deterministic world of traditional unit tests and the probabilistic reality of LLM systems.

Offline Evaluation

LLMs hallucinate, but how often and under what conditions they hallucinate is what determines whether a new feature is functional or whether a production deployment has regressed. Testing agentic functionality can happen at different granularities:

- Node/stage level: if you’re using a graph, test individual nodes; if you’re using a pipeline, test individual stages

- Black-box level: test the graph or pipeline end-to-end without worrying about its internal topology/layout.

Regardless of the level you’re testing at, the structure is the same: a controlled input, an expected output sample, and a properly defined LLM-as-Judge. Each test run executes a given test case multiple times to produce a pass rate, the fraction of runs the Judge accepts. (This is an empirical frequency, not a p-value in the hypothesis-testing sense.) The pass rate tells you whether a failure is a persistent, high-prominence bug worth addressing or something that occurs occasionally and isn’t worth prioritizing.
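The repeated-run idea above can be sketched as follows. `pass_rate`, `classify`, and the 0.8 bar are hypothetical names and values chosen for illustration, and a seeded random stand-in replaces the real "call the agent, then ask the Judge" step.

```python
import random
from typing import Callable


def pass_rate(run_case: Callable[[], bool], runs: int = 10) -> float:
    """Run one test case `runs` times and return the fraction that passed."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passes = sum(1 for _ in range(runs) if run_case())
    return passes / runs


def classify(rate: float, min_pass_rate: float = 0.8) -> str:
    """Hypothetical triage rule: below the bar counts as a persistent failure."""
    return "ok" if rate >= min_pass_rate else "persistent failure"


# Stand-in for the agent plus Judge: passes roughly 90% of the time.
rng = random.Random(0)


def flaky_case() -> bool:
    return rng.random() < 0.9


rate = pass_rate(flaky_case, runs=20)
print(f"pass rate = {rate:.2f} -> {classify(rate)}")
```

More runs give a steadier estimate at a higher model cost, so the run count is a budget decision as much as a statistical one.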

Combining these into a CI pipeline or local test run gives you a full test suite for your LLM functionality with clear pass/fail stages.

Most test case authoring is proactive — you construct product-defined test scenarios up front. But it can also be reactive: bugs caught during QA can be added as new cases. Either way, this is offline evaluation because the test cases are sourced entirely through internal processes. There’s still more reactive data we can leverage: live production data.

Langfuse

Before we get to online evaluations, we need observability infrastructure in place. Langfuse is an open-source LLM observability and evaluation platform. Think of it as your APM (application performance monitoring) layer for LLM applications.

What it does: Langfuse gives you structured tracing for LLM applications. You instrument your code with the Langfuse SDK and it captures every call — prompts sent, completions received, tool calls, retrieval steps — organized into traces (a full request lifecycle) and spans (individual steps within that lifecycle). Alongside the raw I/O, it tracks latency and token cost at every level, so you can see exactly where time and money are being spent.
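To make the trace and span vocabulary concrete, here is a toy data model. It is not the Langfuse SDK or its schema: `Span`, `Trace`, and their fields are hypothetical, and the roll-up assumes spans run one after another.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """One step inside a request, e.g. a retrieval or a model call."""
    name: str
    latency_ms: float
    cost_usd: float = 0.0


@dataclass
class Trace:
    """The full lifecycle of one request, made up of spans."""
    trace_id: str
    spans: list[Span] = field(default_factory=list)

    @property
    def total_latency_ms(self) -> float:
        # Assumes sequential spans; parallel spans would need start/end times.
        return sum(s.latency_ms for s in self.spans)

    @property
    def total_cost_usd(self) -> float:
        return sum(s.cost_usd for s in self.spans)

    def slowest_span(self) -> Span:
        """Return the span that contributed the most latency."""
        return max(self.spans, key=lambda s: s.latency_ms)


trace = Trace("req-001", [
    Span("retrieve_documents", latency_ms=120.0),
    Span("llm_completion", latency_ms=900.0, cost_usd=0.004),
    Span("tool_call:search", latency_ms=250.0),
])
print(trace.total_latency_ms, trace.slowest_span().name)
```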

What you get access to:

- Traces and spans: the full execution path for every request, with inputs, outputs, and metadata at each step

- Scores: numeric or categorical evaluations attached to traces — can be written programmatically by an evaluator or manually by a human annotator

- Session views: grouped traces across a multi-turn conversation

- Dashboards: aggregate metrics over time — error rates, latency distributions, cost trends, score distributions

- Annotation queues: a workflow for human reviewers to label traces and validate evaluations

Deployment options: Langfuse is available as a cloud-hosted service at langfuse.com, or you can self-host it using Docker or Kubernetes. Self-hosting is a common choice for teams with data residency requirements.

Who uses it: engineers instrument it (typically a few lines of SDK setup); QA and product teams consume the dashboards, annotation queues, and evaluation results without needing to touch the code.

Additional features worth knowing about:

- Prompt management: version and deploy prompts from the Langfuse UI, decouple prompt changes from code deploys

- Dataset management: maintain your golden evaluation dataset directly within Langfuse

- Playground: test prompts against live model APIs from within the Langfuse UI

- Integrations: first-class support for LangChain, LlamaIndex, OpenAI SDK, and more via drop-in wrappers

Online Evaluations

With tracing in place, we now have access to the full picture of how our agent is interacting with real user input in production — most importantly, what outputs it produces for a given input. This is the foundation for online evaluation.

For our offline eval suite, the flow was:

- Internally defined test cases (supplied inputs)

- Internally defined expectations (asserted outputs)

- An LLM-as-Judge measuring alignment to expected outputs

An online eval suite replaces the first of these (internally defined test cases) with real-world production traces, and uses those live inputs and outputs to improve the other two as well.

Having access to live traces also gives us additional dimensions beyond raw I/O — latency, token cost, session context. We can test against these deterministically (e.g., `token_cost < threshold`) or non-deterministically via an LLM-as-Judge (e.g., “does this output align with the expected behavior?” or “does this output meet the product’s quality bar?”).
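The deterministic side of this can be sketched with plain threshold checks. `TraceMetrics` and the budget values below are assumptions made for illustration; in practice the fields would be read from whatever your tracing platform records on each trace.

```python
from dataclasses import dataclass


@dataclass
class TraceMetrics:
    """Hypothetical subset of what a trace exposes beyond raw I/O."""
    latency_ms: float
    token_cost_usd: float
    output: str


def evaluate_cost_within_budget(m: TraceMetrics, max_cost_usd: float = 0.05) -> float:
    """1.0 if the request stayed under the cost budget, else 0.0."""
    return 1.0 if m.token_cost_usd < max_cost_usd else 0.0


def evaluate_latency_within_budget(m: TraceMetrics, max_latency_ms: float = 3000.0) -> float:
    """1.0 if the request finished under the latency budget, else 0.0."""
    return 1.0 if m.latency_ms < max_latency_ms else 0.0


def evaluate_non_empty_output(m: TraceMetrics) -> float:
    """1.0 if the agent produced any non-whitespace output, else 0.0."""
    return 1.0 if m.output.strip() else 0.0


fast_and_cheap = TraceMetrics(latency_ms=850.0, token_cost_usd=0.01, output="Done.")
slow_and_costly = TraceMetrics(latency_ms=9000.0, token_cost_usd=0.20, output="  ")

for m in (fast_and_cheap, slow_and_costly):
    print(
        evaluate_cost_within_budget(m),
        evaluate_latency_within_budget(m),
        evaluate_non_empty_output(m),
    )
```

Returning 0.0 or 1.0 rather than a boolean keeps these checks interchangeable with numeric judge scores.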

The results of these evaluations become scores — each score tells you how well your agent performed against a specific criterion. Langfuse lets you attach these scores directly to their corresponding traces, so you can filter, sort, and investigate by score in the UI.

The complete mental model:

- Live traces from your observability platform (Langfuse)

- A set of scores you care about, each representing a quality dimension

- Evaluators — deterministic or LLM-based — that generate those scores against sampled traces

Here’s what a concrete implementation of this looks like.

scores_config.yml

This file is the single source of truth for what dimensions we evaluate. Everything (evaluators, Langfuse score configs, and the runner) references this file.

```yaml
scores:
  - name: answer_relevance
    data_type: NUMERIC
    description: "How relevant is the answer to the user's question? 0 = completely irrelevant, 1 = perfectly relevant."
    min_value: 0.0
    max_value: 1.0
  - name: faithfulness
    data_type: NUMERIC
    description: "Does the answer accurately reflect the retrieved context without hallucination? 0 = significant hallucination, 1 = fully grounded."
    min_value: 0.0
    max_value: 1.0
  - name: tone_quality
    data_type: CATEGORICAL
    description: "The tone and communication quality of the response."
    categories:
      - value: 0
        label: poor
      - value: 1
        label: acceptable
      - value: 2
        label: excellent
```

sync_scores.py

This script reads scores_config.yml and ensures Langfuse has matching score configurations. Run it before any evaluation job to keep Langfuse in sync.

```python
import yaml
from langfuse import Langfuse
from langfuse.api import ConfigCategory


def load_scores_config(path: str = "scores_config.yml") -> dict:
    with open(path) as f:
        return yaml.safe_load(f)


def _existing_score_config_names(langfuse: Langfuse) -> set[str]:
    """Page through all existing score configs and collect their names."""
    names: set[str] = set()
    page = 1
    while True:
        response = langfuse.api.score_configs.get(page=page, limit=100)
        names.update(sc.name for sc in response.data)
        if len(response.data) < 100:
            break
        page += 1
    return names


def sync_scores(langfuse: Langfuse, config_path: str = "scores_config.yml") -> None:
    config = load_scores_config(config_path)
    existing = _existing_score_config_names(langfuse)

    for score in config["scores"]:
        if score["name"] in existing:
            print(f"Score '{score['name']}' already exists, skipping.")
            continue

        kwargs = {
            "name": score["name"],
            "data_type": score["data_type"],
            "description": score.get("description", ""),
        }
        if score["data_type"] == "NUMERIC":
            kwargs["min_value"] = score.get("min_value", 0.0)
            kwargs["max_value"] = score.get("max_value", 1.0)
        elif score["data_type"] == "CATEGORICAL":
            kwargs["categories"] = [
                ConfigCategory(label=cat["label"], value=cat["value"])
                for cat in score.get("categories", [])
            ]

        langfuse.api.score_configs.create(**kwargs)
        print(f"Created score config: {score['name']}")


if __name__ == "__main__":
    client = Langfuse()
    sync_scores(client)
```

sample.py

A small utility module for fetching traces from Langfuse. Keeping sampling logic separate makes it easy to swap strategies (e.g., random sampling, recency-based, failure-filtered) without touching the runner.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

from langfuse import Langfuse
from langfuse.api import TraceWithDetails


def fetch_recent_traces(
    langfuse: Langfuse,
    hours: int = 24,
    limit: int = 50,
    tags: Optional[list[str]] = None,
) -> list[TraceWithDetails]:
    """
    Fetch traces from the last `hours` hours, up to `limit` results.
    Optionally filter to traces that carry specific tags.
    """
    from_timestamp = datetime.now(tz=timezone.utc) - timedelta(hours=hours)
    kwargs = {
        "from_timestamp": from_timestamp,
        "limit": limit,
    }
    if tags:
        kwargs["tags"] = tags
    return langfuse.api.trace.list(**kwargs).data


def fetch_traces_for_evaluation(
    langfuse: Langfuse,
    hours: int = 24,
    limit: int = 50,
) -> list[TraceWithDetails]:
    """
    Fetch traces suitable for evaluation.
    Extend this with additional filters (e.g. excluding traces tagged
    `evaluated`) as your sampling strategy evolves.
    """
    return fetch_recent_traces(
        langfuse=langfuse,
        hours=hours,
        limit=limit,
    )
```
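As one example of swapping strategies, a random-sampling step could sit on top of whatever list of traces the fetch functions return. `sample_traces` is a hypothetical helper, not part of the post's code or of Langfuse. It works on any sequence, so plain strings stand in for traces here.

```python
import random
from typing import Optional, Sequence, TypeVar

T = TypeVar("T")


def sample_traces(traces: Sequence[T], k: int, seed: Optional[int] = None) -> list[T]:
    """Return up to `k` traces chosen uniformly at random, without replacement."""
    rng = random.Random(seed)
    # Cap k at the population size so a quiet day does not raise ValueError.
    return rng.sample(list(traces), min(k, len(traces)))


fetched = [f"trace-{i}" for i in range(10)]  # stand-in for fetched trace objects
picked = sample_traces(fetched, k=3, seed=42)
print(picked)
```

Passing a seed makes a sampling run reproducible, which helps when re-running an evaluation job to debug a score.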

evaluators.py

The evaluator registry. Each evaluator corresponds to a named score in scores_config.yml. The register_evaluator decorator validates that pairing at import time and adds the function to a shared registry that the runner uses.

```python
import json
from functools import wraps
from typing import Callable, Optional

import anthropic
import yaml
from langfuse.api import TraceWithDetails

EVALUATOR_REGISTRY: dict[str, Callable] = {}
_SCORE_NAMES: Optional[set[str]] = None


def _load_score_names(config_path: str = "scores_config.yml") -> set[str]:
    global _SCORE_NAMES
    if _SCORE_NAMES is None:
        with open(config_path) as f:
            config = yaml.safe_load(f)
        _SCORE_NAMES = {s["name"] for s in config["scores"]}
    return _SCORE_NAMES


def register_evaluator(func: Callable) -> Callable:
    """
    Decorator that registers an evaluator function into the global registry.
    The function must be named `evaluate_{score_name}` where `score_name`
    matches an entry in scores_config.yml.
    """
    prefix = "evaluate_"
    if not func.__name__.startswith(prefix):
        raise ValueError(
            f"Evaluator '{func.__name__}' must be named 'evaluate_<score_name>'."
        )

    score_name = func.__name__[len(prefix):]
    score_names = _load_score_names()
    if score_name not in score_names:
        raise ValueError(
            f"No score named '{score_name}' found in scores_config.yml. "
            f"Available scores: {sorted(score_names)}"
        )

    EVALUATOR_REGISTRY[score_name] = func

    @wraps(func)
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)

    return wrapper


def _get_trace_text(trace: TraceWithDetails) -> tuple[str, str]:
    """Extract user input and agent output from a trace."""
    user_input = str(trace.input) if trace.input else ""
    agent_output = str(trace.output) if trace.output else ""
    return user_input, agent_output


@register_evaluator
def evaluate_answer_relevance(trace: TraceWithDetails) -> float:
    """
    Uses Claude as a judge to score how relevant the agent's answer is
    to the user's question. Returns a float in [0, 1].
    """
    user_input, agent_output = _get_trace_text(trace)
    if not user_input or not agent_output:
        return 0.0

    client = anthropic.Anthropic()
    prompt = f"""You are an evaluation judge. Score the relevance of the following answer to the given question.

Question: {user_input}

Answer: {agent_output}

Score the answer on a scale from 0.0 to 1.0 where:
- 0.0 means the answer is completely irrelevant to the question
- 0.5 means the answer is partially relevant but misses key aspects
- 1.0 means the answer directly and completely addresses the question

Respond with a JSON object in exactly this format:
{{"score": <float between 0.0 and 1.0>, "reasoning": "<one sentence explanation>"}}"""

    message = client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=256,
        messages=[{"role": "user", "content": prompt}],
    )
    result = json.loads(message.content[0].text)
    return float(result["score"])


@register_evaluator
def evaluate_faithfulness(trace: TraceWithDetails) -> float:
    """
    Uses Claude as a judge to score whether the agent's output is grounded
    in retrieved context without hallucinating. Returns a float in [0, 1].

    In a real RAG pipeline the retrieved context lives on a child span.
    Pull it from `trace.observations` (or the metadata you set when
    instrumenting retrieval) and pass it in below. The trace input is used
    here as a placeholder so the example stays self-contained.
    """
    user_input, agent_output = _get_trace_text(trace)
    if not user_input or not agent_output:
        return 0.0

    retrieved_context = user_input  # placeholder, see docstring

    client = anthropic.Anthropic()
    prompt = f"""You are an evaluation judge assessing factual faithfulness.

Retrieved context:
{retrieved_context}

Agent response:
{agent_output}

Score how faithfully the response is grounded in the provided context on a scale of 0.0 to 1.0:
- 0.0 means the response contains significant claims not supported by (or contradicting) the context
- 0.5 means the response is mostly grounded but includes some unsupported claims
- 1.0 means every claim in the response is directly supported by the context

Respond with a JSON object in exactly this format:
{{"score": <float between 0.0 and 1.0>, "reasoning": "<one sentence explanation>"}}"""

    message = client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=256,
        messages=[{"role": "user", "content": prompt}],
    )
    result = json.loads(message.content[0].text)
    return float(result["score"])
```

run.py

The entry point. It syncs score configs, samples traces, runs each evaluator, and posts results back to Langfuse.

```python
from langfuse import Langfuse

from evaluators import EVALUATOR_REGISTRY
from sample import fetch_traces_for_evaluation
from sync_scores import sync_scores


def run_evaluations(hours: int = 24, trace_limit: int = 50) -> None:
    langfuse = Langfuse()

    print("Syncing score configs...")
    sync_scores(langfuse)

    print(f"Fetching traces from the last {hours} hours...")
    traces = fetch_traces_for_evaluation(langfuse, hours=hours, limit=trace_limit)
    print(f"Found {len(traces)} traces to evaluate.")

    for trace in traces:
        for score_name, evaluator in EVALUATOR_REGISTRY.items():
            try:
                score_value = evaluator(trace)
                langfuse.create_score(
                    trace_id=trace.id,
                    name=score_name,
                    value=score_value,
                    data_type="NUMERIC",
                )
                print(f"  [{trace.id[:8]}] {score_name} = {score_value:.3f}")
            except Exception as e:
                print(f"  [{trace.id[:8]}] {score_name} failed: {e}")

    langfuse.flush()
    print("Done.")


if __name__ == "__main__":
    run_evaluations()
```
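One fragile spot in the evaluators is that they call `json.loads` directly on the judge’s reply, which raises if the model adds any text around the JSON object. A more forgiving parser might look like the sketch below. `parse_judge_score` is a hypothetical helper, and clamping out-of-range scores (rather than rejecting them) is an assumption about what you want.

```python
import json
import re


def parse_judge_score(raw: str) -> float:
    """Extract the outermost JSON object from `raw` and return its score in [0, 1]."""
    # Greedy match from the first "{" to the last "}" tolerates surrounding prose.
    match = re.search(r"\{.*\}", raw, flags=re.DOTALL)
    if match is None:
        raise ValueError("No JSON object found in judge reply")
    data = json.loads(match.group(0))
    score = float(data["score"])
    # Clamp so a slightly out-of-range reply cannot violate the score config.
    return max(0.0, min(1.0, score))


clean = parse_judge_score('{"score": 0.8, "reasoning": "Addresses the question."}')
wrapped = parse_judge_score(
    'Here is my verdict:\n{"score": 0.25, "reasoning": "Mostly off-topic."}\nThanks!'
)
clamped = parse_judge_score('{"score": 1.7, "reasoning": "Over-enthusiastic judge."}')
```

A reply with no JSON object still raises, which the runner’s existing `try`/`except` reports as a failed evaluation.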

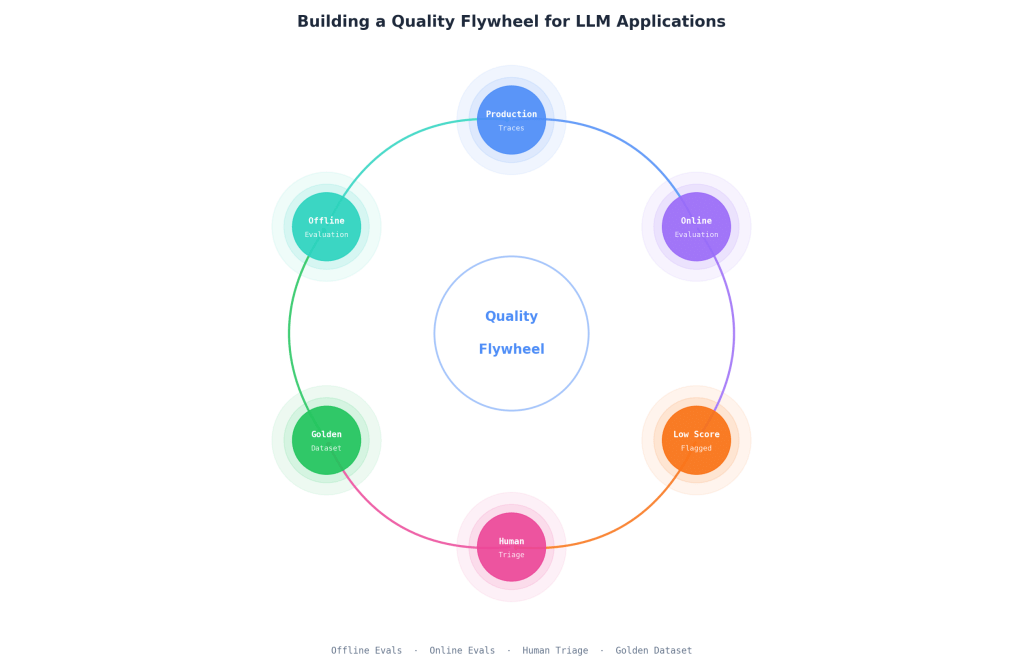

Quality Flywheel

So far we’ve discussed two distinct evaluation strategies: an offline suite that requires hand-crafted test cases, and an online suite that sources cases from sampled live data. The real leverage comes from connecting them.

The idea is simple: traces that score poorly in our online evaluations become candidates for our offline evaluation suite. If production is surfacing failures we didn’t anticipate, those failures are exactly what we want as new test cases. This closes the loop — the system becomes self-improving over time.

To avoid flooding the offline suite with false positives, we add a human-in-the-loop triage step. Low-scoring traces get flagged automatically, a human reviewer inspects them in Langfuse’s annotation queue, and only verified failures graduate into the golden dataset.

Here’s what that looks like as an update to run.py:

```python
from langfuse import Langfuse

from evaluators import EVALUATOR_REGISTRY
from sample import fetch_traces_for_evaluation
from sync_scores import sync_scores

# Score thresholds below which a trace is flagged for human review.
# These should be tuned based on observed score distributions.
REVIEW_THRESHOLDS: dict[str, float] = {
    "answer_relevance": 0.5,
    "faithfulness": 0.6,
}

REVIEW_SCORE_NAME = "needs_review"


def flag_for_review(langfuse: Langfuse, trace_id: str, reason: str) -> None:
    """
    Attach a `needs_review` score to the trace so reviewers can filter for it
    in Langfuse's annotation queue. The Langfuse v4 SDK does not expose a way
    to mutate trace tags after the fact, so we use a categorical score as the
    idiomatic flagging mechanism; the comment carries the reason.
    """
    langfuse.create_score(
        trace_id=trace_id,
        name=REVIEW_SCORE_NAME,
        value="true",
        data_type="CATEGORICAL",
        comment=reason,
    )


def run_evaluations(hours: int = 24, trace_limit: int = 50) -> None:
    langfuse = Langfuse()

    print("Syncing score configs...")
    sync_scores(langfuse)

    print(f"Fetching traces from the last {hours} hours...")
    traces = fetch_traces_for_evaluation(langfuse, hours=hours, limit=trace_limit)
    print(f"Found {len(traces)} traces to evaluate.")

    for trace in traces:
        low_scores: list[str] = []

        for score_name, evaluator in EVALUATOR_REGISTRY.items():
            try:
                score_value = evaluator(trace)
                langfuse.create_score(
                    trace_id=trace.id,
                    name=score_name,
                    value=score_value,
                    data_type="NUMERIC",
                )
                print(f"  [{trace.id[:8]}] {score_name} = {score_value:.3f}")

                threshold = REVIEW_THRESHOLDS.get(score_name)
                if threshold is not None and score_value < threshold:
                    low_scores.append(
                        f"{score_name}={score_value:.3f} (threshold {threshold})"
                    )
            except Exception as e:
                print(f"  [{trace.id[:8]}] {score_name} failed: {e}")

        if low_scores:
            reason = "Low scores: " + ", ".join(low_scores)
            flag_for_review(langfuse, trace.id, reason)
            print(f"  [{trace.id[:8]}] Flagged for human review: {reason}")

    langfuse.flush()
    print("Done.")


if __name__ == "__main__":
    run_evaluations()
```

Traces flagged with the needs_review score show up in Langfuse where a human reviewer (filtering by that score) can mark them as either a genuine failure worth addressing or a false positive to dismiss. Verified failures get added to the offline eval suite’s golden dataset.
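The last hop, from a reviewer’s verdict into the golden dataset, can be sketched with a local JSONL file. Everything here is an assumption made for illustration: the `ReviewedTrace` shape, the `promote_to_golden` helper, and the file format. If you keep your golden dataset in Langfuse, the same filter-and-deduplicate logic would sit in front of its dataset API instead.

```python
import json
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class ReviewedTrace:
    """Hypothetical record of a reviewer's decision on a flagged trace."""
    trace_id: str
    input: str
    output: str
    verdict: str          # "failure" or "false_positive"
    expected_output: str  # what the reviewer says the agent should have produced


def promote_to_golden(reviewed: list[ReviewedTrace], path: Path) -> int:
    """Append verified failures to a JSONL golden dataset, skipping duplicates."""
    seen: set[str] = set()
    if path.exists():
        with path.open() as f:
            seen = {json.loads(line)["trace_id"] for line in f if line.strip()}

    added = 0
    with path.open("a") as f:
        for r in reviewed:
            # Only verified failures graduate; false positives are dropped.
            if r.verdict != "failure" or r.trace_id in seen:
                continue
            case = {
                "trace_id": r.trace_id,
                "input": r.input,
                "expected_output": r.expected_output,
            }
            f.write(json.dumps(case) + "\n")
            seen.add(r.trace_id)
            added += 1
    return added


reviewed = [
    ReviewedTrace("t-1", "Refund window?", "90 days", "failure", "30 days"),
    ReviewedTrace("t-2", "Store hours?", "9 to 5", "false_positive", ""),
]

with tempfile.TemporaryDirectory() as tmp:
    golden = Path(tmp) / "golden.jsonl"
    first = promote_to_golden(reviewed, golden)
    second = promote_to_golden(reviewed, golden)  # re-running adds nothing new
    lines = golden.read_text().splitlines()
```

Storing the reviewer’s expected output, not the agent’s faulty one, is what turns a production failure into a usable offline test case.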

As your dataset grows, you’ll want to curate and maintain it — understanding what’s worth keeping versus what’s just noise, and pruning redundant cases to keep evaluation costs manageable =)

Conclusion

What we’ve built here is a quality system that improves on two axes simultaneously. The offline evaluation suite gives you a controlled, repeatable baseline — a set of known scenarios with expected outputs, versioned alongside your code, runnable in CI. The online evaluation suite gives you a live signal from real production behavior, surfacing the failure modes you didn’t think to test for.

The flywheel is what connects them. When production surfaces a failure, you don’t just fix it and move on — you add it to the golden dataset so it can never silently regress. Over time your test suite becomes a direct record of every production failure your system has ever had, and your confidence in deployments compounds accordingly. The human triage step keeps the dataset clean, ensuring that what makes it into the offline suite is signal, not noise.

This is a living system. The value isn’t in any single evaluation run — it’s in the accumulation. A dataset that starts at 20 hand-crafted cases and grows to 200 production-sourced cases over a few months is a fundamentally different artifact: it represents real user behavior, real edge cases, and real failures. That’s something no amount of upfront test case authoring can fully replicate. Build the infrastructure early, keep the loop tight, and let it compound.

Alternatives

LLM-as-Judge Alternatives

LLM-as-Judge is the most flexible approach, but it’s not the only one — and for some criteria, simpler alternatives will serve you better.

Deterministic / rule-based evaluation — regex matching, JSON schema validation, keyword checks, constraint assertions. Fast, cheap, zero model cost, and perfectly reproducible. The limitation is that rule-based checks can’t handle semantic evaluation: they can tell you whether an output is valid JSON, but not whether it actually answers the question. Use these for structural and format assertions; use LLM judges for quality assertions.
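A few rule-based checks of this kind, using only the standard library. The function names, the `ORD-` order ID format, and the length limit are hypothetical examples, not requirements from any particular product.

```python
import json
import re


def is_valid_json_object(output: str, required_keys: set[str]) -> bool:
    """Structural check: output parses as a JSON object with the required keys."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and required_keys.issubset(data)


ORDER_ID = re.compile(r"\bORD-\d{6}\b")  # hypothetical order ID format


def mentions_order_id(output: str) -> bool:
    """Keyword/regex check: output references an order ID."""
    return ORDER_ID.search(output) is not None


def within_length(output: str, max_chars: int = 500) -> bool:
    """Constraint assertion: output stays within a length limit."""
    return len(output) <= max_chars


good = '{"order_id": "ORD-123456", "status": "shipped"}'
bad = "Your order has shipped!"

print(is_valid_json_object(good, {"order_id", "status"}), mentions_order_id(good))
print(is_valid_json_object(bad, {"order_id", "status"}), mentions_order_id(bad))
```

Neither check can tell whether "shipped" is the correct status. That is the semantic gap an LLM judge is meant to cover.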

Embedding similarity — encode both the actual output and the expected output as vectors and measure cosine similarity. Cheaper than a second LLM call and works well for paraphrase-level equivalence. The weakness is precision: embedding similarity captures semantic closeness but not factual correctness. Two outputs can be topically similar but one can hallucinate facts the other doesn’t — embeddings won’t catch that.
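The precision problem is easy to reproduce even with a toy vectorizer. The sketch below uses bag-of-words counts as a stand-in for a real embedding model (an assumption made to keep it dependency-free; real embeddings behave differently in detail), and the cosine computation is the standard one.

```python
import math
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


expected = bag_of_words("the refund window is 30 days")
paraphrase = bag_of_words("the refund window is 30 days long")
wrong_fact = bag_of_words("the refund window is 90 days")

sim_paraphrase = cosine_similarity(expected, paraphrase)
sim_wrong = cosine_similarity(expected, wrong_fact)
print(f"paraphrase: {sim_paraphrase:.3f}, wrong fact: {sim_wrong:.3f}")
```

The factually wrong answer shares five of six words with the expected one, so it still scores above 0.8. A similarity threshold loose enough to accept paraphrases would likely accept it too.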

Human evaluation — the gold standard. Human raters produce ground-truth labels that no automated method can fully replicate, and they’re essential for calibrating your LLM judge (you need to know your judge agrees with humans before you trust it). The obvious constraint is that human evaluation doesn’t scale to production volumes. The practical pattern is to use human evaluation to validate and calibrate your automated judges, then let the automated judges run at scale.
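One way to check that a judge agrees with humans is to compare its grades with human labels on the same traces. The sketch below computes raw agreement and Cohen’s kappa, which discounts agreement expected by chance. The labels are invented for illustration, and kappa is one reasonable choice of calibration metric, not the only one.

```python
from collections import Counter


def agreement_rate(human: list[str], judge: list[str]) -> float:
    """Fraction of items where the judge's label matches the human label."""
    if len(human) != len(judge) or not human:
        raise ValueError("Label lists must be non-empty and the same length")
    return sum(h == j for h, j in zip(human, judge)) / len(human)


def cohens_kappa(human: list[str], judge: list[str]) -> float:
    """Agreement corrected for chance: (p_o - p_e) / (1 - p_e)."""
    n = len(human)
    observed = agreement_rate(human, judge)
    h_counts, j_counts = Counter(human), Counter(judge)
    labels = h_counts.keys() | j_counts.keys()
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(h_counts[c] * j_counts[c] for c in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


human = ["pass", "pass", "fail", "fail", "pass", "fail", "pass", "pass"]
judge = ["pass", "pass", "fail", "pass", "pass", "fail", "pass", "fail"]

print(f"agreement = {agreement_rate(human, judge):.2f}")
print(f"kappa     = {cohens_kappa(human, judge):.2f}")
```

Here the judge matches the human on 6 of 8 items, but kappa comes out below 0.5 because two raters who both say "pass" most of the time would agree fairly often by chance.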

Langfuse Alternatives

LangSmith (by LangChain) — polished UI, tight integration with the LangChain ecosystem, and a solid evaluation and dataset management story. The trade-off is that its strongest value shows when you’re already using LangChain. If your codebase is framework-agnostic or uses another orchestration layer, the integration feels more forced than it should.

Arize Phoenix — open-source, OpenTelemetry-native, and a natural fit for teams already operating in the ML observability space. The OpenTelemetry-first approach means it composes well with existing observability infrastructure. The evaluation tooling is less mature than Langfuse’s, and the LLM-specific features (annotation queues, prompt management) are less developed.

Weights & Biases Weave — if your team already uses W&B for experiment tracking, Weave adds LLM tracing alongside your ML workflows in a single platform. The downside is that it’s a heavier platform than you need if your primary concern is LLM observability, and it isn’t designed LLM-first the way Langfuse is.

Built-Out Third-Party Solutions

If you’d rather not wire all of this together yourself, there are higher-level frameworks that provide evaluation capabilities out of the box.

RAGAS — purpose-built for RAG pipeline evaluation. It ships with pre-built metrics for faithfulness, context recall, answer relevance, and others that map directly to RAG-specific quality dimensions. The trade-off is scope: RAGAS is excellent for RAG and not particularly suited for general agentic flows with tool calls, multi-step reasoning, or non-retrieval outputs.

DeepEval — a pytest-style LLM evaluation framework with an extensive library of built-in metrics including G-Eval, hallucination detection, and contextual precision. CI integration is straightforward. The trade-off is that the abstraction layer buys convenience at the cost of control — when you need to understand exactly what a metric is measuring (or when it’s wrong), you’re navigating someone else’s prompt engineering rather than your own.

PromptFoo — CLI-first LLM regression testing, excellent for prompt-level evaluation across multiple providers. If your evaluation needs are primarily at the prompt level — comparing output quality across model versions, testing prompt changes — PromptFoo is the fastest way to get there. It’s less suited for complex multi-step agentic flows where you need nested trace context or cross-step evaluation.

The general pattern across all three: they get you to working evaluations faster, but they cap your ceiling. For early-stage projects or pure RAG pipelines, the trade-off often makes sense. For complex agents with evolving product requirements, you’ll likely want the control that comes with building your own evaluation layer on top of an observability platform like Langfuse.

Topics Worth Reading Into

- Structured outputs and constrained decoding — reducing non-determinism at the source rather than compensating for it downstream. Worth understanding before your evaluation infrastructure gets too complex. Start with OpenAI’s Structured Outputs guide and the Outlines library for the constrained-decoding side.

- Fine-tuning on your golden dataset — once your offline eval suite has grown large enough, your golden dataset is also a supervised fine-tuning dataset. This is where the flywheel becomes a training loop. OpenAI’s fine-tuning docs.

- Red-teaming and adversarial testing for agents — systematically probing your agent for failure modes rather than waiting for production to find them. Complements the reactive side of the flywheel. Anthropic’s Challenges in red teaming AI systems and the PyRIT framework are good starting points.

- Multi-agent evaluation strategies — when failures propagate across agent handoffs, attribution gets hard. How do you evaluate a pipeline where Agent A produces context that Agent B uses to produce the final output? Anthropic’s Building effective agents and the τ-bench paper are useful frames here.

- SWE-bench and GAIA — reference benchmarks for agent evaluation. Worth studying not just for the scores, but for the methodology: how the tasks are designed, how success is defined, and what the benchmarks deliberately exclude. They’re useful calibration points for how hard “good” agent evaluation actually is. See SWE-bench and the GAIA paper.
